National Laboratory of Pattern Recognition

Institute of Automation, Chinese Academy of Sciences

3D Human-Computer Interaction

(Supported by Nokia Research Center Europe)


Background

The goal of this project is to develop practical methods for accurate, real-time self-localization of a mobile camera and mapping of the 3D environment (visual SLAM, or VSLAM). VSLAM has many potential applications, such as augmented and mixed reality, natural user interfaces, and games. Although many VSLAM methods exist, their efficiency and robustness, particularly in large-scale outdoor environments, still need considerable improvement. We aim to develop efficient, fast, and robust methods for 3D tracking on mobile devices.

3D human-computer interaction is an important application of VSLAM. Interacting with virtual 3D environments in a natural and error-free manner often requires the ability to track body movements or user-defined objects, which in turn enables natural user interfaces.

Some Results

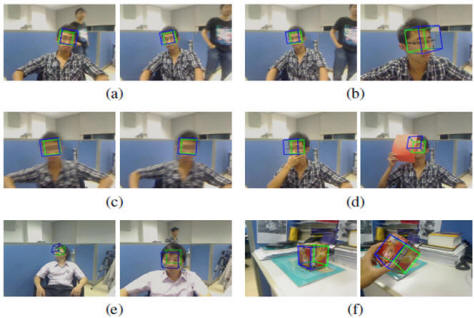

We propose a vision-based tracking method that can reconstruct and track a rigid object with unknown 3D structure in everyday environments. Fig. 1 shows the tracking results under different conditions: dynamic background (a), scale change (b), fast motion (c), partial occlusion (d), tracking on different people (e), and tracking on a generic object (f). Using a single webcam makes the interaction setup extremely simple, and generic objects in everyday environments can be tracked and used for interaction.

As a concrete example, we demonstrate an interactive 3D scene display system that applies the proposed tracking method. The system adapts the rendered scene according to the tracked viewer's head position and orientation. The recovered 6-DOF head pose allows users to easily expand the field of view by rotating their heads. Fig. 2 shows a typical scenario of interactive 3D scene display.
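To illustrate how a tracked 6-DOF head pose can drive the rendered view, the following is a minimal sketch and not the system's actual implementation. It assumes the pose is given as yaw, pitch, and roll angles plus a 3D position, that rotations compose as R = Ry(yaw) · Rx(pitch) · Rz(roll), and that the camera looks along its local -z axis (an OpenGL-style convention). All function names are hypothetical. The view matrix is taken as the inverse of the head's rigid transform, [Rᵀ | -Rᵀt].

```python
import math


def matmul3(a, b):
    """Multiply two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_from_euler(yaw, pitch, roll):
    """Head rotation R = Ry(yaw) * Rx(pitch) * Rz(roll), angles in radians.

    The Euler convention is an assumption made for this sketch.
    """
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    ry = [[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]]
    rx = [[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]]
    rz = [[cr, -sr, 0.0], [sr, cr, 0.0], [0.0, 0.0, 1.0]]
    return matmul3(matmul3(ry, rx), rz)


def view_matrix(rotation, position):
    """Return the 4x4 view matrix, the inverse of the head pose [R | t]."""
    # The inverse of a rotation matrix is its transpose.
    rt = [[rotation[j][i] for j in range(3)] for i in range(3)]
    # Translation part of the inverse transform: -R^T * t.
    t = [-sum(rt[i][k] * position[k] for k in range(3)) for i in range(3)]
    return [rt[0] + [t[0]], rt[1] + [t[1]], rt[2] + [t[2]],
            [0.0, 0.0, 0.0, 1.0]]


def transform_point(m, p):
    """Map a 3D world point into view (head) coordinates."""
    x, y, z = p
    return [m[i][0] * x + m[i][1] * y + m[i][2] * z + m[i][3]
            for i in range(3)]
```

For example, with the head at (0, 0, 2) and no rotation, `transform_point(view_matrix(rotation_from_euler(0, 0, 0), [0, 0, 2]), [0, 0, 0])` places the world origin two units in front of the viewer along -z. Recomputing this matrix for every tracked frame is one simple way to make the rendered scene follow the viewer's head.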

Fig. 1. Tracking results

Fig. 2. The rendered images of a virtual 3D scene changing according to the head pose